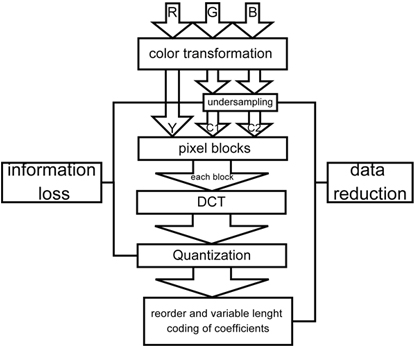

Nearly all digital cameras use a compression algorithm known as “JPEG compression”. How does it work?

The algorithm can be separated into several steps. We show only the steps for compression; decompression performs the inverse steps in the opposite order. We also cover only the most common case: the lossy compression of 8-bit RGB data. “Lossy” means that the compression also discards some of the image content (as opposed to lossless compression).

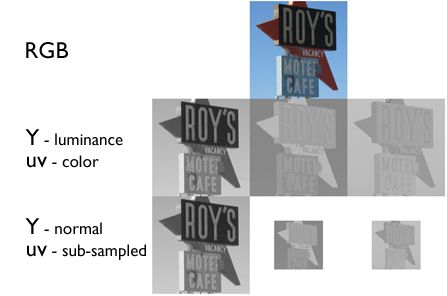

Color Conversion and Subsampling

Starting with the RGB data, the image is separated into a luminance component and two color components by converting it to Yuv (in JPEG files this representation is usually called YCbCr). The conversion itself does not reduce the amount of data, as it only changes the representation of the same information. But because the human observer is much more sensitive to intensity information than to color information, the color components can be subsampled without a significant loss of visible image information. The subsampling does reduce the amount of data significantly: if the two color components are halved in both width and height, the image shrinks from three full-resolution channels to the equivalent of one and a half.
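The conversion and subsampling can be sketched in a few lines of Python. This is only an illustration: the weights are the full-range values commonly used in JPEG files (JFIF), the function names are made up for this example, and the subsampling simply averages each 2x2 neighbourhood, which is one possible choice among several.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert one 8-bit RGB pixel to luminance (Y) and two color components (Cb, Cr)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def subsample_2x2(plane):
    """Halve a color plane in both directions by averaging each 2x2 neighbourhood.

    Assumes the plane is a list of rows with even width and even height.
    """
    height, width = len(plane), len(plane[0])
    return [
        [
            (plane[i][j] + plane[i][j + 1] + plane[i + 1][j] + plane[i + 1][j + 1]) / 4
            for j in range(0, width, 2)
        ]
        for i in range(0, height, 2)
    ]
```

A gray pixel such as (255, 255, 255) ends up with both color components at the neutral value 128, and a 4x4 color plane shrinks to 2x2 after subsampling, so each color component keeps only a quarter of its samples.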

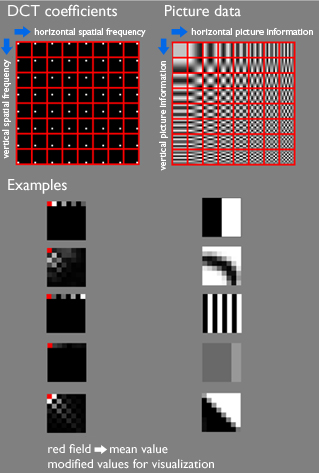

Block-Processing and DCT

The Yuv image data with the subsampled color components is then divided into blocks of 8x8 pixels. From this point on, the complete algorithm operates on these blocks. Each block is transformed using the discrete cosine transform (DCT). What does this mean? In the spatial domain (before the transform), the data is described by a digital value for each pixel, so the image content is represented as a list of pixel positions and pixel values. After the transform, the image content is described by the coefficients of the spatial frequencies in the horizontal and vertical directions. In the spatial domain we need to store 64 pixel values; in the frequency domain we need to store 64 frequency coefficients. So there is no data reduction so far.
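To make the transform concrete, here is a direct, unoptimized sketch of the two-dimensional DCT (type II) for a single 8x8 block. The function name `dct_2d` is chosen for this example only. Real encoders use much faster factorizations and also subtract 128 from every sample beforehand; both are left out here.

```python
import math

N = 8  # JPEG block size


def dct_2d(block):
    """Return the 8x8 DCT coefficients of an 8x8 block (list of 8 rows of 8 values)."""

    def scale(k):
        # The zero-frequency basis function gets an extra factor of 1/sqrt(2).
        return 1 / math.sqrt(2) if k == 0 else 1.0

    coeffs = [[0.0] * N for _ in range(N)]
    for u in range(N):          # vertical frequency index
        for v in range(N):      # horizontal frequency index
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (
                        block[x][y]
                        * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                        * math.cos((2 * y + 1) * v * math.pi / (2 * N))
                    )
            coeffs[u][v] = 0.25 * scale(u) * scale(v) * total
    return coeffs
```

For a completely flat block, all the content ends up in the single coefficient `coeffs[0][0]` (the average brightness) and the other 63 coefficients are zero up to rounding error. Sixty-four values go in and sixty-four come out, which matches the observation that the transform alone saves nothing.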

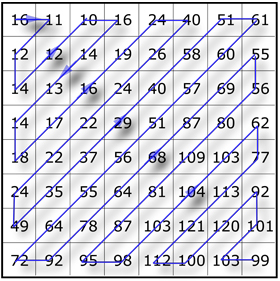

Quantization

To reduce the amount of data needed to store the 64 coefficients, they are quantized. Depending on the size of the quantization steps, more or less information is lost in this step. In most cases, the user can define the strength of the JPEG compression. Quantization is the step where this user setting influences the result (remaining image quality and file size).
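A minimal sketch of quantization follows. The table produced by `make_table` is purely hypothetical and not taken from any real encoder; it only mimics the typical property that the step size grows with frequency. The `strength` parameter stands in for the user's compression setting.

```python
def make_table(strength):
    """Hypothetical 8x8 quantization table: larger steps for higher frequencies."""
    return [[1 + (u + v) * strength for v in range(8)] for u in range(8)]


def quantize(coeffs, table):
    """Divide each coefficient by its step size and round to the nearest integer."""
    return [[round(coeffs[u][v] / table[u][v]) for v in range(8)] for u in range(8)]


def dequantize(levels, table):
    """Decoder side: multiply back. The rounding error is not recoverable."""
    return [[levels[u][v] * table[u][v] for v in range(8)] for u in range(8)]
```

With `strength = 4`, the step size is 1 for the lowest frequency and 57 for the highest. A high-frequency coefficient of 5 is then rounded to 0 and is lost for good, while a low-frequency coefficient of 80 survives unchanged.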

Reorder and variable length encoding

The coarser the quantization, the more coefficients are reduced to zero. These zero-valued coefficients are far more likely to be found at the higher frequencies than at the lower ones. The data is therefore reordered by spatial frequency, low frequencies first and high frequencies last. After reordering, the sequence very likely starts with a few non-zero values followed by a long run of zeros. Instead of storing “0 0 0 0 0 0 0 0 0 0”, we can simply store “10x 0”. This also reduces the amount of data significantly.
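The reordering and the “10x 0” idea can be sketched as follows. The scan walks the block diagonal by diagonal in the usual zigzag pattern, and the run-length step stores each value together with the number of times it repeats. This is a simplification for illustration: actual JPEG files store pairs of zero-run length and next value and then Huffman-code them. The function names are made up for this example.

```python
from itertools import groupby


def zigzag_order(n=8):
    """Return the (row, column) positions of an n x n block in zigzag scan order."""
    return sorted(
        ((u, v) for u in range(n) for v in range(n)),
        # Sort by diagonal first; alternate the direction on every other diagonal.
        key=lambda p: (p[0] + p[1], p[0] if (p[0] + p[1]) % 2 else -p[0]),
    )


def zigzag_scan(block):
    """Flatten an 8x8 block into 64 values, low frequencies first."""
    return [block[u][v] for u, v in zigzag_order(len(block))]


def run_length(values):
    """Collapse repeated values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]
```

A quantized block that holds only three non-zero low-frequency values, for example 80, 6 and -2, becomes `[(80, 1), (6, 1), (-2, 1), (0, 61)]`: four pairs instead of 64 numbers.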

Conclusion

JPEG compression is a block-based compression. The data reduction is achieved by subsampling the color information, quantizing the DCT coefficients, and Huffman coding (reordering and coding). The user can control how much image quality is lost to the data reduction through settings or presets. For high-quality compression, the subsampling can be skipped and the quantization matrix chosen so that the information loss is low. For high compression settings, the subsampling is turned on and the quantization matrix is chosen to force most coefficients to 0. In that case, the image shows clearly visible artifacts after decompression.

ua